A couple of weeks ago we recorded the highest number of questions answered – 542

Yesterday we answered the most in a single day – 136

I feel we’re doing something right and it also demonstrates the need for such a service…

A couple of weeks ago we recorded the highest number of questions answered – 542

Yesterday we answered the most in a single day – 136

I feel we’re doing something right and it also demonstrates the need for such a service…

Clinical uncertainty is often discussed in abstract terms — gaps in evidence, unmet research needs, or variation in practice. But a more revealing perspective comes from looking at what clinicians actually choose to read.

When we examined a recent group of the most-viewed clinical questions on our site, a clear picture emerged. These were not obscure academic debates. They were practical, sometimes uncomfortable uncertainties that many clinicians appear to share.

The most striking feature was that high-interest topics tended to appear in clusters rather than as isolated curiosities.

Several of the most-viewed questions focused on digital tools to improve medication adherence in adolescents. These did not simply ask whether such interventions are effective. They explored which approaches work best and what barriers prevent successful implementation. This suggests clinicians are moving beyond curiosity about digital health towards the harder question of how to make it work in real life.

Another group of widely read questions centred on complex diagnostic scenarios — patients with neurological symptoms, fever or unusual exposures. These are the moments when medicine becomes less about guidelines and more about judgement. The level of interest these questions attract is a reminder that uncertainty at the point of diagnosis remains one of the profession’s greatest challenges.

There was also strong engagement with questions about clinical processes and protocols, particularly in paediatric and critical care settings. Issues such as sedation weaning, transfusion reactions and pre-operative fasting may appear routine, but they carry significant safety implications. The popularity of these topics suggests clinicians are acutely aware that getting the details wrong can have serious consequences.

Some of the most-viewed questions revisited established procedures, such as arthroscopic lavage for osteoarthritis or the management of infected prostheses. These reflect a profession that is increasingly willing to question traditional practices in the light of evolving evidence.

Perhaps most tellingly, several high-interest topics extended beyond conventional biomedical decision-making. Questions about lifestyle influences, behavioural development and service innovations such as emergency department redirection hint at a broader shift in clinical thinking. Modern healthcare uncertainty is no longer confined to diagnosis and drug therapy. It increasingly includes systems, behaviours and patient expectations.

Looking at the strength of evidence behind these popular questions reveals a further, slightly uncomfortable truth.

Where the evidence base is relatively strong, clinicians are often still searching — not for answers about effectiveness, but for guidance on how to implement evidence safely and consistently. Questions about digital adherence interventions, procedural protocols and changing treatment pathways fall into this category. The challenge is not discovering what works, but applying it in complex real-world environments.

By contrast, the questions linked to more limited or moderate evidence often involve diagnostic ambiguity, rare clinical scenarios or organisational change. These are situations where clinicians cannot simply follow a recommendation. They must interpret incomplete information and make decisions under uncertainty.

In other words, stronger evidence does not remove doubt. It shifts the nature of clinical curiosity — from “does this work?” to “how do I use this in practice?”

The fact that these questions attract the most attention should make us pause. They represent collective uncertainty, not isolated gaps in knowledge. They highlight the everyday tensions clinicians face between evidence, experience and system pressures.

If we want decision-support tools and evidence resources to remain relevant, we need to recognise this reality. Clinicians are not only looking for definitive answers. They are looking for help navigating the messy, evolving landscape of modern healthcare.

Understanding what clinicians choose to read may therefore tell us more about the future of evidence-based practice than any guideline or research agenda.

Before AskTrip officially launched, we were fortunate to have a fantastic group of clinicians and information specialists who volunteered to beta test the system. Their feedback was invaluable in helping us identify problems, refine features, and improve the overall experience.

Now, nine months on, we’re preparing the next phase of development – and we’d love to recruit a new group of volunteer testers to help us put a series of upcoming changes through their paces.

Many of these improvements come directly from user feedback. Others reflect things we’ve learned from analysing real-world questions and usage patterns. Together, we believe they represent a significant step forward for AskTrip, but we need your help to make sure we get them right.

We expect testing to take place in stages.

We’re making some substantial changes, and asking users to test everything at once could be overwhelming. It also risks more subtle issues being missed. Instead, we plan to introduce updates in phases so testers can focus on specific features and give more targeted feedback.

The first stage will focus on new work designed to reduce intent drift and avoid what we’ve previously described as “EBM wallpaper” (see this blog post for a fuller explanation).

Later stages are likely to include testing:

Overall, we anticipate up to three testing phases.

Taking part won’t be onerous. We’ll simply ask you to use the system as you normally would and share your impressions. This might include:

We also hope there’ll be an element of fun in being among the first to try new features — and in helping shape a tool designed to support evidence-based clinical decisions.

If you’d like to be involved, please get in touch (email: jon.brassey@tripdatabase.com)

We’d be delighted to have you help us shape the next evolution of AskTrip.

Last week marked a milestone for AskTrip; for the first time, we answered more than 500 clinical questions in a single week, reaching a new high of 542 questions answered.

Interestingly, the week began and ended with questions linked by a common theme – pain – yet illustrating the remarkable breadth of issues clinicians bring to AskTrip.

The first question of the week asked: What adverse effects might occur when carbamazepine and oxycodone are co-administered for pain management?

Here, the focus was on drug safety and interaction risk — a complex prescribing scenario involving multimorbidity, polypharmacy, and the need to balance analgesia with potential harms.

The final question of the week took us into a very different evidence space: What is the effectiveness of adding manual therapy to exercise therapy in reducing pain and disability in adults with chronic non-specific low back pain?

This reflects the non-pharmacological management of pain, where clinicians seek clarity on the value of physical and rehabilitative interventions supported by trials and systematic reviews.

Together, these two questions neatly capture what AskTrip is becoming known for – rapid, evidence-based answers across the full spectrum of clinical uncertainty. From medication safety to rehabilitation strategies, from individual prescribing decisions to broader questions of effectiveness, the diversity of questions continues to grow.

Surpassing 500 answers in a week is more than just a number. It reflects increasing trust from clinicians, expanding use at the point of care, and a widening recognition that high-quality evidence can, and should, be easier to access.

If this record week is any indication, the demand for fast, reliable clinical answers is only going in one direction.

Recently we had a brief problem on Trip where the site became unstable and temporarily crashed. What followed turned into an interesting example of how AI can help diagnose tricky technical issues.

The problem started when we noticed that some of our servers were repeatedly failing. At first, the cause wasn’t obvious. The system had been running smoothly, and the usual monitoring tools didn’t clearly show what was going wrong.

One of our developers downloaded the detailed system logs and tried something a little different. Instead of manually combing through thousands of lines of information, he asked Claude (an AI system) to analyse the logs and the relevant code.

Claude suggested a possible explanation: Under certain circumstances, the software could accidentally try to send two replies to the same request.

In web systems, each request must receive exactly one response. Once the system sends that reply, the connection is effectively finished. If the software tries to send another one, the server throws an error because the conversation is already closed.

Normally this wouldn’t happen often. But if it occurs repeatedly, those errors can accumulate and cause servers to fail.

And that’s exactly what happened.

It appears the issue was triggered by Google’s web crawler, which was sending a variety of unusual requests to the site. Those requests exposed a hidden bug in our code that had probably been sitting quietly there for some time.

Once the problem was identified, the fix was straightforward and has now been deployed.

The interesting part of the story is how quickly the issue was diagnosed. Debugging problems like this can often take hours of searching through logs and code. In this case, AI helped highlight the likely cause almost immediately.

It’s a small example of how AI is starting to act as a useful assistant for engineers, helping identify problems faster and keeping services running smoothly.

Over the past few months we’ve received hundreds of individual pieces of feedback on AskTrip answers. Around 15% were low ratings. That might sound worrying, but I actually find the low scores the most valuable.

Why? Because they’re actionable.

People who are dissatisfied are far more likely to tell you about it, so the 15% is likely to be an overestimate of overall dissatisfaction. But each low score comes with something far more useful than a number: a clue about where the product isn’t meeting expectations. And when you look across hundreds of these, clear patterns start to emerge.

Here are the main things we learned.

One of the most common frustrations wasn’t that the information was wrong – it was that it drifted.

A clinician might ask a very specific question (a particular population, drug comparison, route, or clinical dilemma), but the answer sometimes broadened into a more general discussion of the topic.

Interesting? Yes.

Helpful for a decision? Not always.

The lesson for us is simple: relevance beats comprehensiveness. Staying locked onto the exact clinical question matters more than covering the wider subject area.

Another pattern was what I think of as “EBM wallpaper” – answers that looked polished and evidence-based but were built on thin or indirect evidence.

Users don’t just want citations. They want honest calibration:

In other words, clinicians value honest uncertainty more than polished narrative.

Sometimes there is no directly relevant research – or the question uses a term that isn’t recognised in the evidence.

In these situations, the risk for AI is to be “helpful” by filling the gap with general advice, assumptions, or plausible definitions. That can create confident answers that aren’t actually evidence-based.

Our approach will be different. When evidence is missing or uncertain, AskTrip will:

Sometimes the most helpful response isn’t a longer answer — it’s helping you ask the next, better question.

Interestingly, the feedback wasn’t all about making answers shorter or tighter.

Around one third of users told us the opposite – they’d like longer, more detailed answers.

This highlights something important: clinicians use AskTrip in different ways. Some want a quick, decision-focused summary. Others want to explore the underlying evidence in depth.

So the challenge isn’t simply length – it’s flexibility.

This feedback isn’t just interesting – it’s directly shaping the next phase of AskTrip.

We’re actively working on two key improvements.

1. Better-calibrated answers

We’re refining how answers are generated so that they:

2. A redesigned answer format

We’re moving toward a structure that supports different user needs:

In short:

Short by default. Deep on demand.

It’s easy to focus on average ratings or overall satisfaction. But the most useful feedback often comes from the edges, the cases where we didn’t meet expectations.

Those low scores aren’t failures. They’re signals.

And if we listen carefully, they help us do what AskTrip is designed to do in the first place:

Turn evidence into answers that clinicians can actually use – clearly, honestly, and at the level of detail they need.

I introduced the idea of chunking in the post HTML Scissors towards the end of last year. Since then we’ve been working on delivering on the promise and things are starting to come online. Before expanding on that, I’ll restate the problem…

A significant element of how we order Trip search results is how relevant the search terms are to the documents in our index – and this is strongly influenced by term density: the more a document is focused on the topic, the higher it is likely to rank.

However, this creates an important problem.

Take a clinical guideline on asthma. It might be 10,000 words long, with a 1,000-word section devoted to diagnosis. That section is highly relevant to a search for asthma diagnosis. But across the document as a whole, only 10% of the content relates to diagnosis. From a search engine’s perspective, the topic is relatively diluted; so the guideline may be judged less relevant and appear lower in the results than shorter documents that focus entirely on diagnosis.

In other words, long, high-quality documents can be penalised simply because their relevant content is spread thinly.

So, we’re starting to work with chunking – cutting long documents into smaller, coherent elements. These chunks are appearing live in the Trip results and we’re getting quite excited! We haven’t ironed out all the issues yet, but using the technology live is the only way we’ll refine and improve it.

An example search that highlights chunking

A search for Meningococcal Chemoprophylaxis reveals the following top result:

A few things to point out:

The document title is Guidance for public health management of meningococcal disease in the UK and we have added Chemoprophylaxis in Healthcare Settings (Detailed) ‒ Chemoprophylaxis Recommendations in Healthcare Settings. As we chunk we assign a chunk title to sit alongside the actual title. Whether this continues to be displayed is an ongoing debate.

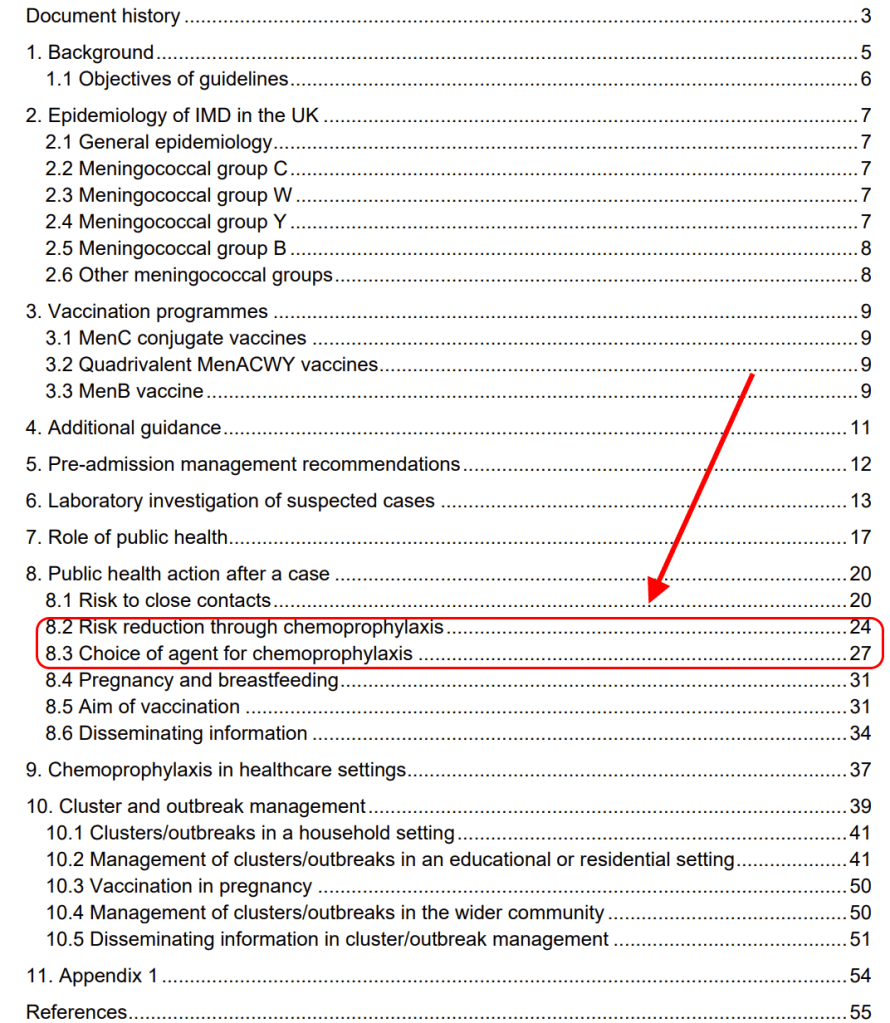

If you look at the the documents index:

You will see that only 6 pages (pages 24–30) are about chemoprophylaxis — less than 10% of the 63-page document. As a result, the document as a whole would score relatively low for this topic and would be unlikely to appear near the top of the results, even though those six pages are highly relevant.

By treating those pages as a separate unit, the content becomes highly concentrated on chemoprophylaxis — increasing its term density and allowing it to rank much more appropriately for the search.

In short, chunking helps Trip find the relevant part, not just the relevant document.

That means long, authoritative sources are no longer penalised for covering multiple topics – and clinicians are more likely to see the evidence they need, faster.

We’re just getting started, and your searches will help us make it better.

Quiet changes like this don’t always get noticed – but they make a real difference to turning research into practice.

In the previous post (Turning Research Into Practice – Except When the Research Isn’t There), we showed that nearly half of real clinical questions asked through AskTrip sit in areas with only moderate or limited evidence. These are not marginal issues – they are everyday decisions affecting millions of patients.

If we take those questions seriously, the obvious next step is to ask:

What should we actually research next?

Rather than starting from theory, funding trends, or academic fashion, we start from real clinical uncertainty. In other words, from the questions clinicians are already asking, repeatedly, because existing evidence does not give them confident answers.

For this exercise, we prioritised questions using two simple criteria:

We assume, following standard health policy principles, that:

Using those assumptions, a small number of clear research priorities emerge directly from the AskTrip data.

A caveat is important: we have not independently verified the full evidence base for each question. Some may already have better answers than are reflected here. This represents an initial, data-driven approach to research prioritisation based on clinical uncertainty, which we intend to refine through ongoing evidence review.

Research question:

In critically ill adult patients, do GLP-1 receptor agonists improve glycaemic control and clinical outcomes compared with standard insulin-based management?

Why this matters:

If new evidence shows GLP-1 drugs improve ICU glycaemic control or outcomes, this could significantly benefit many hospitalised diabetics (high incidence, high severity). ICU patients with uncontrolled diabetes face organ damage risks, so a positive finding would be high impact per patient.

Research question:

In patients with COPD, does pulmonary rehabilitation reduce the incidence of recurrent pneumonia compared with usual care?

Why this matters:

COPD is a prevalent chronic disease. Demonstrating that pulmonary rehabilitation (or other intervention) lowers pneumonia risk could reduce hospitalisations and mortality in a large population. Even a moderate reduction in pneumonia incidence would scale to many lives saved, given COPD’s prevalence. This combines moderate per-person effect with large population benefit.

Research question:

Among patients requiring opioid analgesia, which opioid is associated with the lowest incidence of clinically significant constipation and treatment discontinuation?

Why this matters:

Opioids are widely used across many conditions. Identifying the least constipating (or safest) opioid could improve quality of life for countless patients. We assume this is important as opioid-induced constipation is a common, burdensome side effect.

Research question:

In patients with postural orthostatic tachycardia syndrome (POTS), does gabapentin improve symptoms and functional outcomes compared with placebo or standard management?

Why this matters:

POTS is relatively rare but can be severely disabling. Proving efficacy (or not) of gabapentin would directly change care for those patients (high individual impact), even if the population is smaller.

Research question:

In older adults, do simple behavioural or environmental interventions (such as caffeine reduction) reduce the incidence of falls compared with usual care?

Why this matters:

Falls in older adults cause major morbidity. If simple interventions can reduce falls, small individual benefits could prevent serious injuries at the population level. This merits research given the high burden of falls, even if any single intervention has a modest effect.

Research questions:

In preterm infants, what is the optimal timing for feeding tube placement to maximise growth and minimise complications?

In infants, does early introduction of allergenic foods reduce the long-term risk of food allergy compared with delayed introduction?

Why this matters:

These address early-life interventions with potentially lifelong consequences. Even small nutritional or developmental improvements can drastically affect a child’s trajectory, making these high impact for individuals despite smaller population sizes.

Research questions:

In veterans with PTSD, which psychological therapies produce sustained functional improvement?

In autistic individuals, which interventions improve long-term quality of life and independence?

Why this matters:

Mental health conditions account for large disability burdens. Better evidence here could be transformative for patients and families.

Research questions:

Which asymptomatic populations benefit from routine screening, and at what intervals?

What vaccination schedules maximise population-level benefit while minimising harm and resource use?

Why this matters:

These questions shape national guidelines and affect very large populations. Even minor changes in evidence can influence millions of clinical decisions.

Systems like AskTrip do not just answer questions – they reveal where the research system itself is failing.

In the end, the most important research questions are not the ones that sound exciting, but the ones clinicians keep asking because no one has ever given them a reliable answer.

This is a follow-up post to What 10,000 Clinical Questions Tell Us About Evidence, Practice, and Uncertainty. Evidence-based medicine promises that clinical decisions should be grounded in high-quality research. Over the past three decades, enormous effort has gone into building guidelines, systematic reviews, and trial infrastructures to make this possible.

But what does the landscape of evidence actually look like when you step away from theory and look at the questions clinicians really ask?

We recently analysed 10,000 real clinical questions submitted to AskTrip and filtered those rated as having only moderate or limited evidence. These are not obscure or academic questions – they are everyday problems arising in routine practice.

Nearly half of all questions fell into this category.

What emerged was not random noise, but a remarkably coherent map of where medical evidence runs thin.

These questions are not poorly formed. They are not “bad questions”. They are often precisely the right questions to ask.

The problem is that they sit in parts of medicine where strong evidence is structurally hard to generate.

This is not a failure of individual clinicians. It is a feature of how medical knowledge is produced.

One of the largest clusters involves long-term conditions:

Typical questions are not about whether treatments work in principle, but about how best to use them in real people:

These are exactly the questions that RCTs are worst at answering. Trials usually study single diseases in isolation. Real patients rarely oblige.

Another strong theme is prevention:

These questions often involve modest interventions with potentially large population effects.

For example:

These are difficult to study, context-dependent, and rarely funded at scale – yet they shape huge amounts of morbidity.

Mental health and neurological conditions form a disproportionate share of weak-evidence questions:

These areas are methodologically hard:

The result is that clinicians repeatedly ask questions where guidance exists, but confidence does not.

Another dominant pattern is questions about people who are routinely excluded from trials:

These questions matter because they involve:

They are also exactly the patients for whom evidence is most limited.

Some of the most revealing questions are not about diseases at all, but about systems:

These questions expose a deeper problem: much of modern medicine is built on historical practice, professional culture, and institutional inertia rather than direct evidence of benefit.

We also found many near-identical questions asked by different clinicians.

Not because the questions were trivial – but because the same uncertainties arise independently across contexts.

This is important.

Duplication is not redundancy. It is a signal.

It is the clinical equivalent of multiple sensors all detecting the same fault line.

If you take this dataset seriously, it suggests a very different research agenda.

Not driven by:

But by:

In other words: research priorities defined by real uncertainty, not academic fashion.

The most uncomfortable finding is this:

Evidence-based medicine works best in exactly the situations where it is least needed.

It works worst in:

These are the situations that dominate real clinical work.

Systems like AskTrip do something unexpected.

They don’t just answer questions.

They reveal:

At scale, this becomes something new:

a live, evolving map of medical uncertainty.

Not a failure of medicine – but a diagnostic tool for the research system itself.

If medicine is serious about “turning research into practice”, it also has to confront the inverse problem:

turning practice into research.

The 10,000 questions are not just a product.

They are a dataset that quietly answers one of the hardest questions in healthcare:

What don’t we actually know – and who is paying the price for that ignorance?

And the answer, increasingly, is:

almost everyone.

Recent Comments