Hot on the heels of releasing our guideline score, we’re releasing our RCT score. We’ve been working with the wonderful RobotReviewer team for years now, and one of their products is a Risk of Bias (RoB) score for RCTs. We introduced it in 2016, when we classified all trials as either ‘low risk of bias’ or ‘high/unknown risk of bias’. When we recently rewrote the site we did not immediately include the RoB score, in part because the thinking and technology have developed considerably since 2016. So, we’re very pleased to reintroduce it to the site.

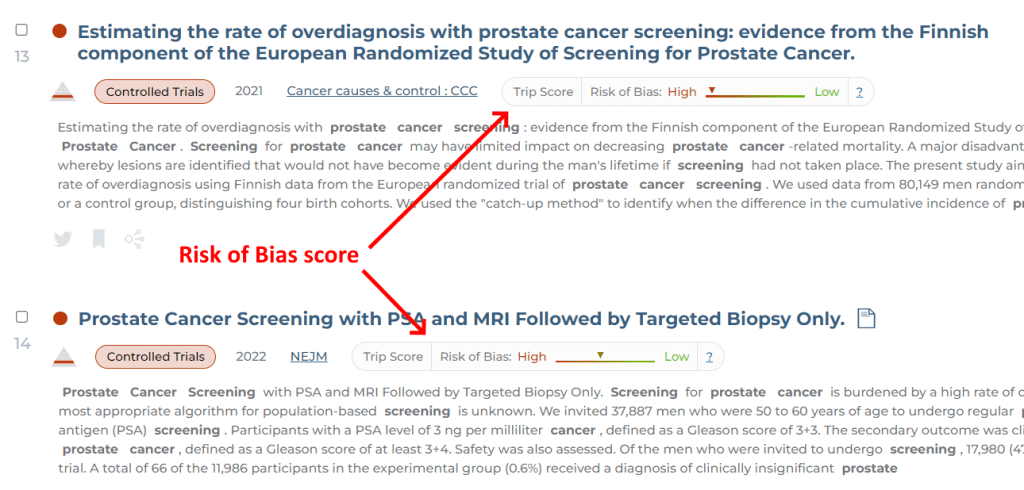

The new score does not categorise the RoB as ‘low’ or ‘high/unknown’; instead, it gives a score for the likely RoB on a linear scale. We take that score and transform it into a graphic similar to the one used for the guideline score.

RCTs are important in the world of EBM and, as with guidelines, they are not all equally good! This score reflects the likelihood of bias and should help our users better make sense of the evidence base.
