Now that the recoding of the site is behind us, we’re looking forward to adding new features and improving the quality of what is already there. The latter was brought into focus by a strong, but fair, email about issues with Trip. Perhaps there was an element of complacency on our part (subscribers are increasing, we had the rewrite under our belt, and we had received little negative feedback). Perhaps we took our eye off the ball. So, it was a great email to receive – removing the complacency and reminding us that quality is really important.

So, what have we done, or what are we doing, in the short term?

- De-duplication – as mentioned in the last blog post, we’re working on a better de-duplication system. There is a small, but significant, number of duplicate articles, which we will soon be removing [Work starting next week]

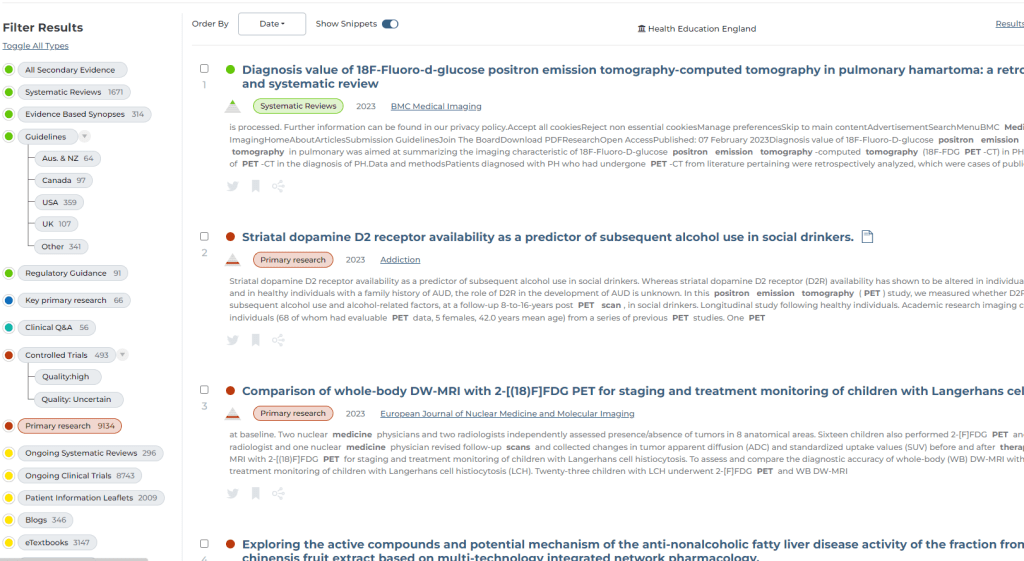

- Dual categories – another blog post highlighted that some articles were classified as both, say, a ‘systematic review’ and ‘primary research’. This has now been fixed! [Fixed]

- Mis-classified results – linked to the above, some articles were appearing under the wrong filter. So, a user might select the ‘guideline’ filter and be shown a ‘systematic review’! [Work starting next week]

- Covid-19 – I really dropped the ball here! At the start of Covid-19 we tried to keep up with things, which meant some shortcuts were taken. To speed up the synonym handling we hard-wired certain terms. This bypassed the main synonym function and resulted in the title words of early articles being double-counted – so early documents (particularly those mentioning ‘coronavirus’) were put at the top of the results. In the early days this wasn’t an issue, but it became problematic as time rolled on. This has now been fixed! [Fixed]

- Ongoing systematic reviews and ongoing clinical trials – our results are ordered by three main elements: (1) relevancy, (2) date and (3) publication score. Due to the sheer volume of ongoing trials and reviews (all highly relevant to the search terms, and many of them from the last few years), they had started to dominate the results. So, what we have done is significantly reduce the remaining element (publication score) with a view to pushing these results down. This has required a complete reindex of the site, which should hopefully be finished early next week [Ongoing].
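To make the ordering issue in the last point a little more concrete, here is a minimal sketch in Python. It is not Trip’s actual ranking code: the weighted sum, the `Result` class, the `combined_score` and `rank` functions, and every number are assumptions invented purely for illustration. It only shows how lowering the publication score of an ongoing trial can move it below a guideline, even when the trial scores higher on relevancy and date.

```python
from dataclasses import dataclass


@dataclass
class Result:
    title: str
    relevancy: float          # hypothetical 0..1 match against the search terms
    recency: float            # hypothetical 0..1 value, newer documents score higher
    publication_score: float  # hypothetical 0..1 value per publication type


def combined_score(result: Result) -> float:
    # Assumption: the three elements are simply added together.
    # The real combination used on the site is not described in this post.
    return result.relevancy + result.recency + result.publication_score


def rank(results: list[Result]) -> list[Result]:
    # Highest combined score first.
    return sorted(results, key=combined_score, reverse=True)


guideline = Result("Guideline", relevancy=0.8, recency=0.6, publication_score=0.9)

# Before: the ongoing trial is very relevant, very recent and has a middling
# publication score, so it edges ahead of the guideline.
before = [guideline, Result("Ongoing trial", 0.9, 0.9, publication_score=0.6)]

# After: only the publication score of the ongoing trial has been reduced.
after = [guideline, Result("Ongoing trial", 0.9, 0.9, publication_score=0.1)]

print([r.title for r in rank(before)])
print([r.title for r in rank(after)])
```

In this toy example the ongoing trial is listed first with the original publication score and second once that score is reduced, which is the effect the change is aiming for.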

So, lots has happened and lots more is happening next week, including a visit to the senders of the email. I suspect I’ll have another few things to think about after that!

But, if you’re reading this and think there are other problems with the site, please, please, please reach out to me directly (jon.brassey@tripdatabase.com) and let me know. We’ll hopefully make the site better for you and your fellow users…
