The production of guidelines is a complex task and there are a multitude of methods, some more rigorous than others. While Trip places guidelines at the top of the evidence pyramid, we need to recognise that this is an oversimplification. Our guideline score is designed to help our users understand how robust a guideline might be.

The guideline score is a concept we’ve explored for a number of years (e.g. Quality and guidelines from 2019 and Grading guidelines from 2020). It involves us scoring each publisher (not each individual guideline; see limitations below) against 5 criteria:

- Do they publish their methodology? No = 0, Yes = 1, Yes and mention AGREE (or similar) = 2

- Do they use any evidence grading e.g. GRADE? No = 0, Yes = 2

- Do they undertake a systematic evidence search? Unsure/No = 0, Yes = 2

- Are they clear about funding? No = 0, Yes = 1

- Do they mention how they handle conflict of interest? No = 0, Yes = 1
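The five criteria above add up to a simple total, which can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the code Trip actually uses: the class name, field names and function name are all hypothetical, and it assumes that "Unsure" is recorded as `False` and that mentioning AGREE (or similar) only counts when a methodology is published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PublisherAssessment:
    """Hypothetical record of the answers for one guideline publisher."""
    publishes_methodology: bool
    mentions_agree_or_similar: bool  # assumed to count only if a methodology is published
    uses_evidence_grading: bool      # e.g. GRADE
    systematic_search: bool          # "Unsure" is recorded as False
    clear_about_funding: bool
    states_conflict_of_interest_handling: bool


def guideline_score(a: PublisherAssessment) -> int:
    """Sum the points for the five criteria (0 to 8)."""
    score = 0
    # Methodology: No = 0, Yes = 1, Yes and mentions AGREE (or similar) = 2
    if a.publishes_methodology:
        score += 2 if a.mentions_agree_or_similar else 1
    # Evidence grading: No = 0, Yes = 2
    score += 2 if a.uses_evidence_grading else 0
    # Systematic evidence search: Unsure/No = 0, Yes = 2
    score += 2 if a.systematic_search else 0
    # Funding clarity: No = 0, Yes = 1
    score += 1 if a.clear_about_funding else 0
    # Conflict of interest handling: No = 0, Yes = 1
    score += 1 if a.states_conflict_of_interest_handling else 0
    return score


example = PublisherAssessment(
    publishes_methodology=True,
    mentions_agree_or_similar=True,
    uses_evidence_grading=True,
    systematic_search=False,
    clear_about_funding=True,
    states_conflict_of_interest_handling=False,
)
print(guideline_score(example))  # 2 + 2 + 0 + 1 + 0 = 5
```

Because the score is attached to the publisher, every guideline from that publisher would inherit the same number in this sketch.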

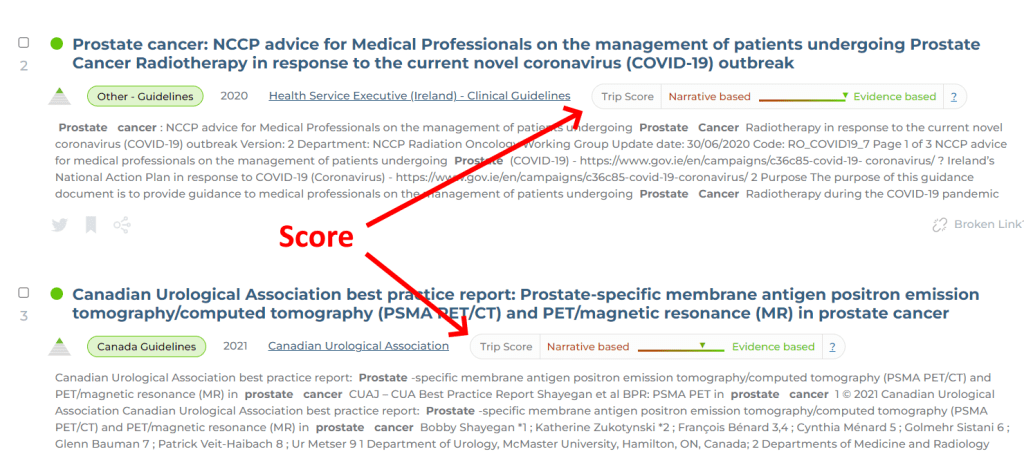

The highest score being 8. Our work has shown that the above results give a very good approximations to the more formal methods, hence we’re using this simpler approach. And this is what it looks like:

Limitations

This approach has a number of issues, for instance:

- It is carried out at the publisher level and was done at a certain date. So, if we scored a publisher in 2021, that score is applied both to guidelines it produced in 2014 (say) and to those it produced in 2023. The methodology might well have changed between those dates. This is not reflected in our scoring.

- Linked with the above point, it assumes the guideline publisher uses the same methodology for all guidelines.

- Many of the lowest-scoring producers score poorly because they do not publish their methodologies, which makes it impossible to score them properly, so our approach may underestimate the rigour of their methodology. If our approach encourages publishers to be more transparent, then it’ll be a great result in itself!

- The scoring system uses 5 elements. It might benefit from more, but we have to pragmatically balance rigour and resource.

May 9, 2023 at 3:32 pm

The improve method is good and could help in a research paradigm

May 10, 2023 at 5:53 am

Even with the aforementioned limitations, even without assigning a score, your tool is an excellent guideline for quick and effective critical thinking.

Thank you very much for your work. I will use it
