This post starts with an apology to Douglas and Hywel at the Centre for Evidence Based Dermatology up at Nottingham, UK. Our analysis of dermatology Q&As should have been finished early last year. No excuses really, other than my inability to write papers!

Regular readers of the blog will know I’m very interested in better ways of procuring research, be it primary research (e.g. clinical trials) or secondary research (e.g. systematic reviews). When answering real questions from front-line clinicians, the ideal is to offer good, solid research. Unfortunately, all too often the evidence is either not there or of poor quality.

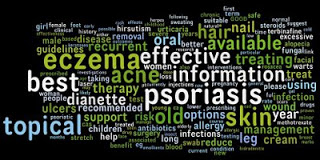

My experience (of answering over 10,000 clinical questions) is that, all too often, the research isn’t particularly focussed on, or designed to answer, clinicians’ questions. It was one of my reasons for getting involved in DUETs and for creating the Tag Cloud of Clinical Uncertainty. The idea behind both of these initiatives is to highlight gaps in the research with a view to improving the way research is commissioned.

One approach we’re using to highlight gaps in the evidence is to analyse the clinical questions we’ve answered and look for themes. In this instance we’re matching real questions against the existing research to see how good that research is. I’m involved in the NLH’s specialist library for skin disorders, so selecting that area seemed sensible. We decided to analyse all the clinical questions ATTRACT and the now defunct NLH Q&A Service have answered over the years. In total that’s 357 questions from primary care health professionals, the vast majority being general practitioners.

One ‘quick win’ is to place all the questions (just the questions, not the answer text) into Wordle and see what we get. There’s a thumbnail below but you can see the full cloud by clicking here. Certain junk words have been removed, but that’s mainly to enhance impact. This type of visualisation is a powerful way of revealing themes.
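
For anyone curious about what sits behind a word cloud, here is a rough sketch in Python of the counting step: strip out the junk words, then tally how often each remaining word appears across the questions. This is not how Wordle itself works internally, just an illustration of the idea. The junk-word list and the sample questions are invented for the example and are not taken from our 357 questions.

```python
import re
from collections import Counter

# Hypothetical junk-word list; the words actually removed for the cloud
# are not listed in this post.
JUNK_WORDS = {"the", "a", "an", "of", "in", "is", "for", "to", "and", "what", "with"}


def term_frequencies(questions, junk_words=JUNK_WORDS):
    """Count how often each non-junk word appears across all questions."""
    counts = Counter()
    for question in questions:
        # Lower-case the text and keep runs of letters only.
        words = re.findall(r"[a-z]+", question.lower())
        counts.update(word for word in words if word not in junk_words)
    return counts


# Made-up questions for illustration only.
sample_questions = [
    "What is the best treatment for eczema in children?",
    "Is there evidence for topical treatment of acne?",
]

# The most frequent words are the ones a word cloud would draw largest.
print(term_frequencies(sample_questions).most_common(5))
```

The larger a word’s count, the larger it would appear in the cloud, which is why removing junk words makes the clinical themes stand out.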

May the rest of the analysis move quickly from now on!
