Building our automated system has taken around two years and has absorbed much of our attention. But now it’s time to look back at the main purpose of Trip: to help health professionals find answers to their clinical questions!

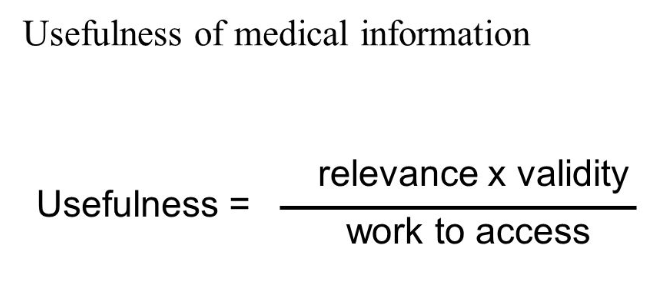

This lovely equation, shown above, is taken from Shaughnessy and Slawson’s work on Information Mastery.

So, each component is important, assuming you’re interested in useful clinical information:

- Relevance – how relevant is the information for the clinical question?

- Validity – how robust is the information? Is it based on good or weak evidence?

- Work to access – do you get the answer quickly or does it take a long time?

So, for a given question Trip needs to maximise ‘relevance’ and ‘validity’ and reduce ‘work’.
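To make that trade-off concrete, here is a minimal sketch of the equation as a scoring function. It assumes the usual form of the Information Mastery equation (usefulness = relevance × validity / work). The 0 to 1 scales, the minutes unit and the example numbers are illustrative assumptions, not anything Trip actually measures.

```python
def usefulness(relevance: float, validity: float, work: float) -> float:
    """Usefulness = relevance * validity / work (assumed form of the equation).

    relevance and validity are assumed to sit on a 0-1 scale;
    work is assumed to be minutes spent getting to the answer.
    """
    if work <= 0:
        raise ValueError("work must be positive")
    return relevance * validity / work


# Hypothetical numbers: a decent summary found in 2 minutes versus
# a stronger, more relevant review that takes 30 minutes to digest.
quick_summary = usefulness(relevance=0.8, validity=0.6, work=2.0)
long_review = usefulness(relevance=0.9, validity=0.9, work=30.0)

print(quick_summary > long_review)
```

With these made-up numbers the quicker source comes out ahead even though it is weaker on relevance and validity, which is why reducing ‘work’ matters as much as improving the other two.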

Relevance: For a given set of search terms (which is how the searcher represents their clinical Q) we need to ensure the more useful documents are returned. We’re working on a new algorithm which should be boosted by learning from previous searches. This should improve the relevance of results.

Validity: Our algorithm (current and future) will always favour higher-quality content. But we need to be aware that high-quality content is the tip of the evidence pyramid – it’s more robust but there’s a lot less of it, so it answers fewer questions.

Work: Our search is quick, and if we boost relevance then there are fewer articles for health professionals to look through. However, search returns articles likely to answer the Q; it doesn’t directly answer the Q. We’ve explored this with the answer engine but that is hampered by limited scalability. So, how can we reduce the work? If we can predict the question, can we highlight the likely passage from the top articles so the user can immediately see that answer and/or judge the relevance of the document? This seems like a rich seam to mine.
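As a rough illustration of the highlighting idea, the sketch below picks the passage that shares the most words with the question. This is a deliberately naive word-overlap heuristic, not Trip’s method; the function names and the example passages are invented for illustration, and a real system would need something far more robust.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_passage(question: str, passages: list[str]) -> str:
    """Return the passage sharing the most distinct words with the question."""
    q_terms = tokenize(question)
    return max(passages, key=lambda p: len(q_terms & tokenize(p)))


# Invented example passages standing in for text from a top-ranked article.
passages = [
    "Asthma is a common chronic condition in children.",
    "Inhaled corticosteroids reduce exacerbations in children with asthma.",
    "The trial was funded by a national research body.",
]
question = "Do inhaled corticosteroids reduce asthma exacerbations in children?"

highlight = best_passage(question, passages)
print(highlight)
```

Even this crude version shows the appeal: the user sees the candidate answer straight away and can judge the document without opening it.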

So much to think about…
