We’re keen to help users base their decisions on the best-quality evidence. We use the pyramid to express the hierarchy of evidence, but there is a danger of that being too simplistic. For instance, not all systematic reviews are high-quality and some are, frankly, terrible.

We have been working on quality scores for RCTs and guidelines for some time and both should be released by early 2023. Scoring systematic reviews is equally important. Given that Trip covers hundreds of thousands of systematic reviews, any tool we introduce needs to be automated. Well, we’ve taken the first tentative steps…

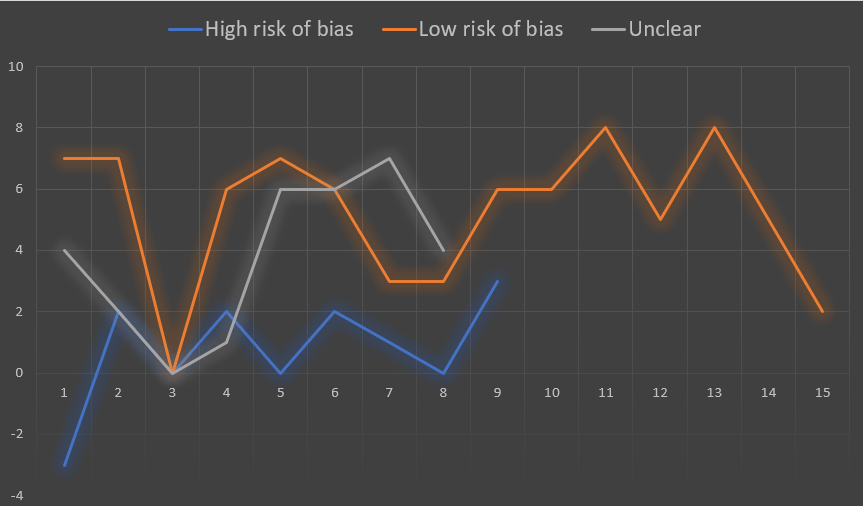

We have devised a scoring system, capable of automation, and trialled this on a sample of 32 systematic reviews. We knew the assessments of the 32 prior to starting and the scoring was done by a 3rd party and was freely available data on the web (using ROBIS the scores were low, high or unclear risk of bias). We then correlated our scores against the ROBIS scores and this is what the graph looks like:

The y-axis is our score (ranging from -3 to 8) and the x-axis is simply the number of the systematic review (15 were graded as low risk of bias, 9 as high risk of bias and 8 as unclear).

For a first attempt the results are impressive and show the validity of the approach. The average score per risk of bias category is as follows:

- Low – 5.3

- Unclear – 3.75

- High – 0.78
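
The per-category averages above are a simple group mean. Here is a minimal sketch in Python, assuming each review is stored as a (ROBIS category, score) pair. The function name `mean_score_by_category` and the sample values are placeholders for illustration, not the 32 reviews from our trial:

```python
from collections import defaultdict
from statistics import mean


def mean_score_by_category(reviews):
    """Return the mean automated score for each ROBIS risk-of-bias category.

    `reviews` is an iterable of (category, score) pairs.
    """
    grouped = defaultdict(list)
    for category, score in reviews:
        grouped[category].append(score)
    return {category: mean(scores) for category, scores in grouped.items()}


# Made-up values for illustration only (not the trial data).
sample = [("low", 6), ("low", 4), ("unclear", 3), ("high", 1), ("high", -1)]
print(mean_score_by_category(sample))
```

If the scoring works, the mean should fall as the ROBIS risk of bias rises, which is the pattern in the averages listed above.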

We clearly need to spend more time on this, trying to understand why, for instance, the 3rd ‘low risk of bias’ systematic review scored so low in our system. But there’s time for that: time to adjust the weighting and possibly add or remove scoring elements.
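
To make the idea of adjusting weights and adding or removing elements concrete, here is a rough sketch of an additive score. It is an assumption about the general shape of such a system, not a description of ours: the element names (`element_a` and so on) and the weights are hypothetical placeholders.

```python
def score_review(elements, weights):
    """Sum the weights of the scoring elements that a review satisfies.

    `elements` maps element name -> bool (whether the review has it);
    `weights` maps element name -> points, which may be negative.
    Elements without a weight are ignored, so dropping an element
    only requires removing it from `weights`.
    """
    return sum(w for name, w in weights.items() if elements.get(name, False))


# Placeholder element names and weights, for illustration only.
weights = {"element_a": 2, "element_b": 1, "element_c": -3}
review = {"element_a": True, "element_b": False, "element_c": True}
print(score_review(review, weights))
```

Keeping the weights in one table means a weighting can be changed, or an element added or removed, without touching the scoring logic, and the whole sample can then be re-scored to see whether the category averages separate more cleanly.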

Bottom line: we’re well on the way to rolling out an automated systematic review scoring system that can help Trip users make better use of the evidence we cover.
