This is fascinating technology and we’re continuing to explore its use. One focus is auto-summarising documents and, so far, the results are pretty good and interesting (with the large caveat that it can be problematic and hallucinate)! Below are two examples, generated from https://chat.openai.com/:

- Extract the main recommendations from a SIGN guideline

- Summarise the methods section of a Cochrane review

The first response seems reasonably good. However, the second one was problematic. I tried it a few times, mostly varying the wording of the prompt, to get it to ‘correctly’ summarise. A few times it suggested the document had nothing to do with cranberry juice and UTIs! Also, on one occasion it stuck to summarising the methods section whereas, in the example below, it went further and reported findings and conclusions too!

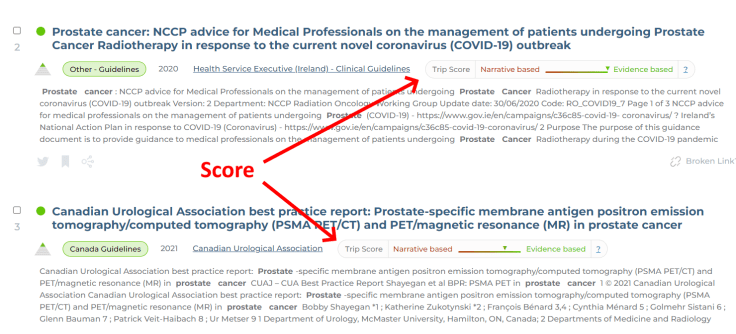

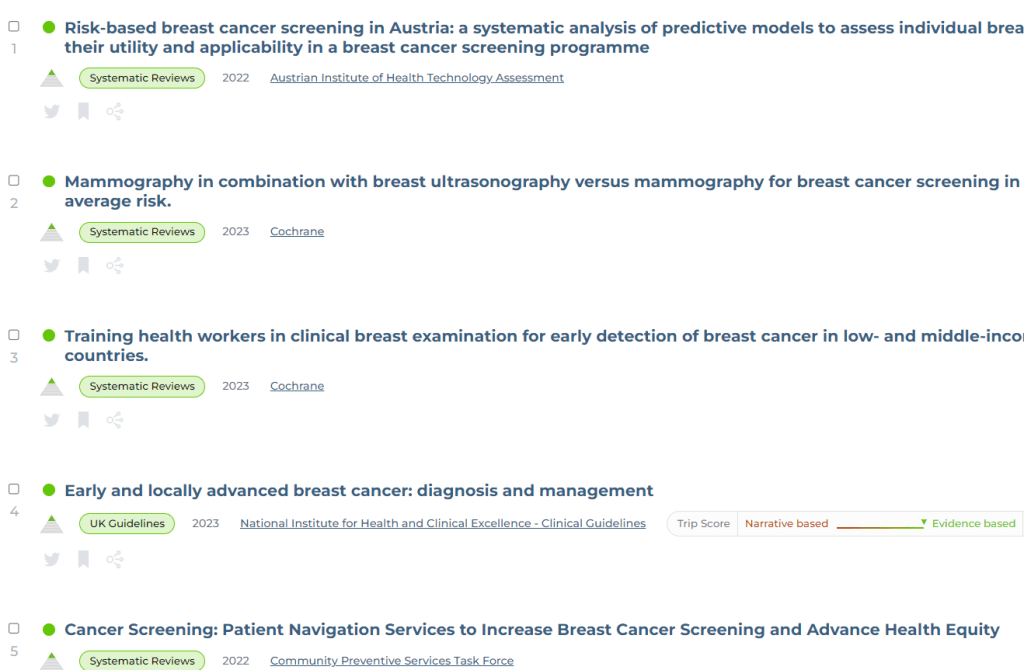

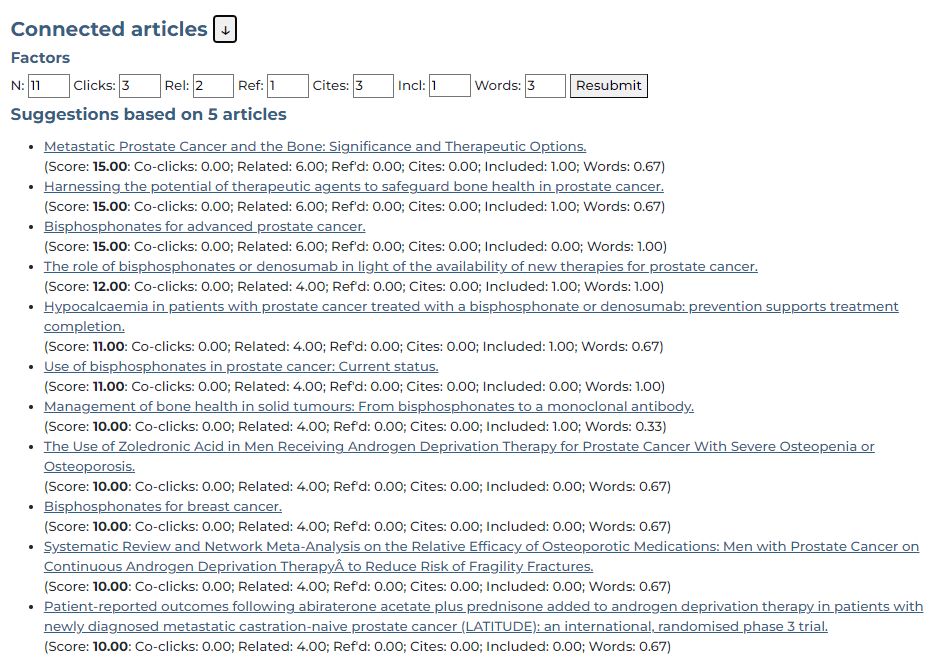

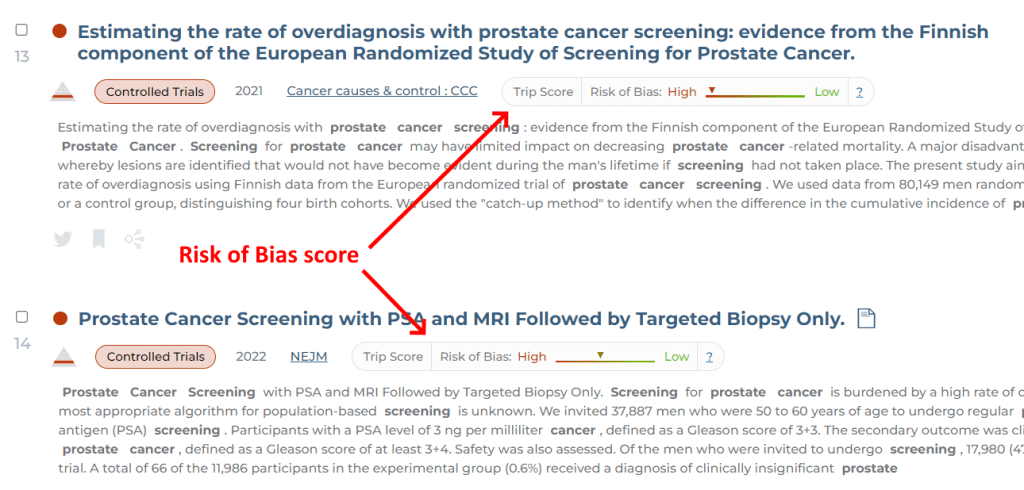

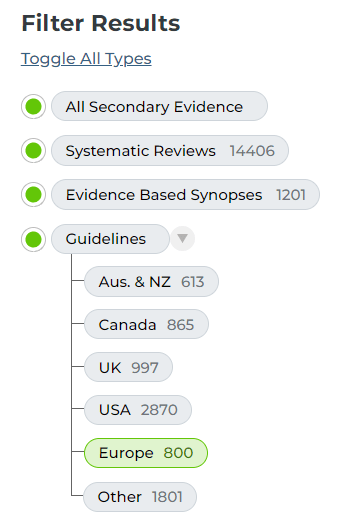

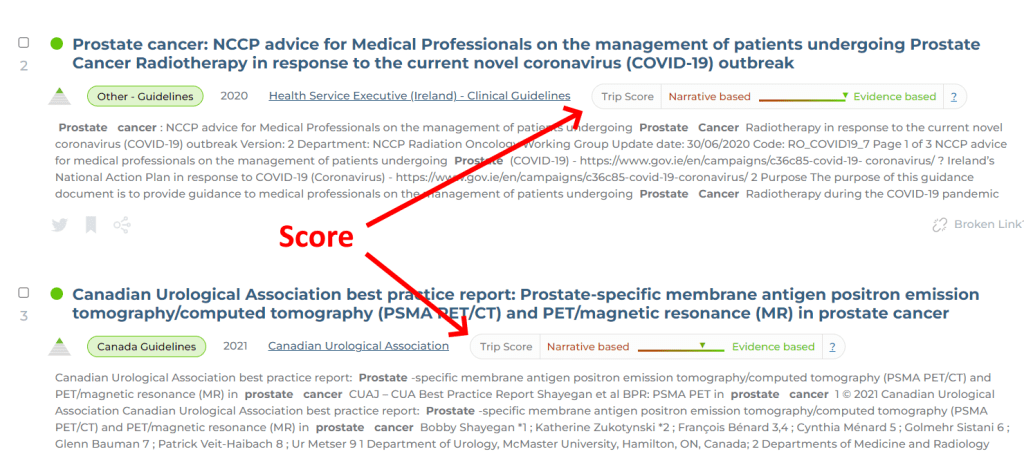

Our first use case is to summarise long documents into abstracts. Initially this would just be used by our search index, and it would allow us to change our search default to ‘title and abstract’. Currently our default is ‘title and body of the document’ which, for long documents, is particularly prone to returning low-relevancy documents. For instance, a document on measles that is 100,000 words long mentions prostate cancer once. This means it is returned for a search on prostate cancer! This is normally fine as it’d be low down in the results. However, if you filter the documents to a single area (e.g. UK guidelines) where there are few documents, then suddenly the low-relevancy results can appear on the first page of results. A summary of the document would not mention prostate cancer, so the document would not be returned in the results!
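To make the measles example concrete, here is a minimal Python sketch of the difference between the two defaults. It is purely illustrative and is not how Trip's search actually works: the `Document` class, the `search` function, the sample titles and texts, and the simple phrase matching are all assumptions made up for this example (a real search engine would rank results rather than just filter them).

```python
from dataclasses import dataclass


@dataclass
class Document:
    title: str
    abstract: str  # hypothetical: stands in for an auto-generated summary
    body: str


def matches(doc: Document, query: str, fields: tuple) -> bool:
    """Return True if the query phrase occurs in any of the chosen fields."""
    needle = query.lower()
    return any(needle in getattr(doc, field).lower() for field in fields)


def search(docs: list, query: str, fields: tuple) -> list:
    """Return the titles of documents matching the query in the given fields."""
    return [doc.title for doc in docs if matches(doc, query, fields)]


# Made-up sample documents, not real Trip records.
docs = [
    Document(
        title="Measles: clinical guideline",
        abstract="Guidance on the diagnosis, management and prevention of measles.",
        body="A very long text about measles with one passing mention of prostate cancer.",
    ),
    Document(
        title="Prostate cancer: diagnosis and management",
        abstract="Recommendations on diagnosing and treating prostate cancer.",
        body="Full text covering prostate cancer referral, staging and treatment.",
    ),
]

# 'Title and body': the measles document matches because of a single mention.
print(search(docs, "prostate cancer", ("title", "body")))

# 'Title and abstract': only the document that is really about the topic matches.
print(search(docs, "prostate cancer", ("title", "abstract")))
```

With ‘title and body’ both documents come back; with ‘title and abstract’ only the prostate cancer document does, which is the behaviour we are hoping a good summary would give us.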

The second use case would be to allow users to see the summary, helping them decide whether the document is useful or not. There are issues around copyright to consider…

We’re having some fun with this technology and we’re happy to hear from you if you have any suggestions as to how Trip could use it to good effect!

Extract the main recommendations from a SIGN guideline

Prompt: Can you extract the background and main recommendations from this document https://www.sign.ac.uk/media/1987/sign-164-eating-disorders-v2.pdf

Response: Yes, I can extract the background and main recommendations from the document you provided.

Background:

The document is a guideline developed by the Scottish Intercollegiate Guidelines Network (SIGN) on the assessment and management of eating disorders. It provides recommendations based on the best available evidence for healthcare professionals involved in the care of individuals with eating disorders.

Main recommendations:

The document provides recommendations for the assessment, treatment, and management of eating disorders, including anorexia nervosa, bulimia nervosa, binge eating disorder, and other specified feeding or eating disorders. Here are some of the main recommendations:

Assessment: A thorough assessment of the individual’s physical, psychological, and social needs should be conducted as soon as possible. This includes obtaining a detailed history of the eating disorder, physical examination, and laboratory investigations.

Treatment: A multidisciplinary team approach is recommended for the treatment of eating disorders. The team should include a range of healthcare professionals, such as psychiatrists, dietitians, psychologists, and physicians.

Psychological interventions: Evidence-based psychological interventions, such as cognitive-behavioral therapy, interpersonal therapy, and family therapy, should be offered to individuals with eating disorders.

Nutritional management: Nutritional management should be tailored to the individual’s needs and preferences. A dietitian should be involved in the development and implementation of the nutritional plan.

Pharmacological interventions: Medication should only be used in conjunction with psychological and nutritional interventions. The use of medication should be carefully monitored, and the benefits and risks should be weighed.

Monitoring and follow-up: Individuals with eating disorders should receive ongoing monitoring and follow-up care. This includes regular physical and mental health assessments, as well as ongoing support from the multidisciplinary team.

Overall, the document emphasizes the importance of a collaborative, evidence-based approach to the assessment and management of eating disorders.

Summarise the methods section of a Cochrane review

Prompt: Can you summarise the methods section, as bullet points, from this document https://www.cochranelibrary.com/cdsr/doi/10.1002/14651858.CD001321.pub6/full

Response:

- This Cochrane systematic review aimed to evaluate the effectiveness of cranberry products in preventing urinary tract infections (UTIs) in people of all ages.

- The review included 28 randomized controlled trials (RCTs) involving a total of 4,473 participants, who were mostly women.

- The studies compared cranberry products (juice, capsules, tablets, or other formulations) to placebo, no treatment, or other interventions for preventing UTIs.

- The primary outcome was the incidence of symptomatic UTIs, defined as the presence of at least one urinary tract symptom (such as pain, burning, or frequency) and the presence of at least 10^5 colony-forming units (CFU) of bacteria per milliliter of urine.

- The secondary outcomes included the incidence of asymptomatic bacteriuria (the presence of bacteria in the urine without symptoms), the incidence of UTIs caused by specific bacteria (such as E. coli), adverse events, and compliance with the interventions.

- The review used standard Cochrane methods for searching, selecting, and assessing the quality of the studies, and for synthesizing the data using meta-analysis.

- The quality of the evidence was assessed using the GRADE approach, which takes into account factors such as study design, risk of bias, inconsistency, imprecision, and publication bias.

- The review found that cranberry products may slightly reduce the incidence of symptomatic UTIs compared to placebo or no treatment, but the effect was small and of uncertain clinical significance.

- The absolute reduction in UTIs ranged from 0% to 13%, depending on the type and dose of cranberry product, the population studied, and other factors.

- The review did not find evidence of a difference in the incidence of asymptomatic bacteriuria or adverse events between cranberry and control groups.

- The review concluded that cranberry products cannot be recommended as a routine prophylaxis for UTIs, but may be considered as a non-pharmacological option for some women who are motivated to try them and understand the limitations of the evidence.
